ETL | Mage.ai – Charts, Analysis, Testing, Overview, Cleansing

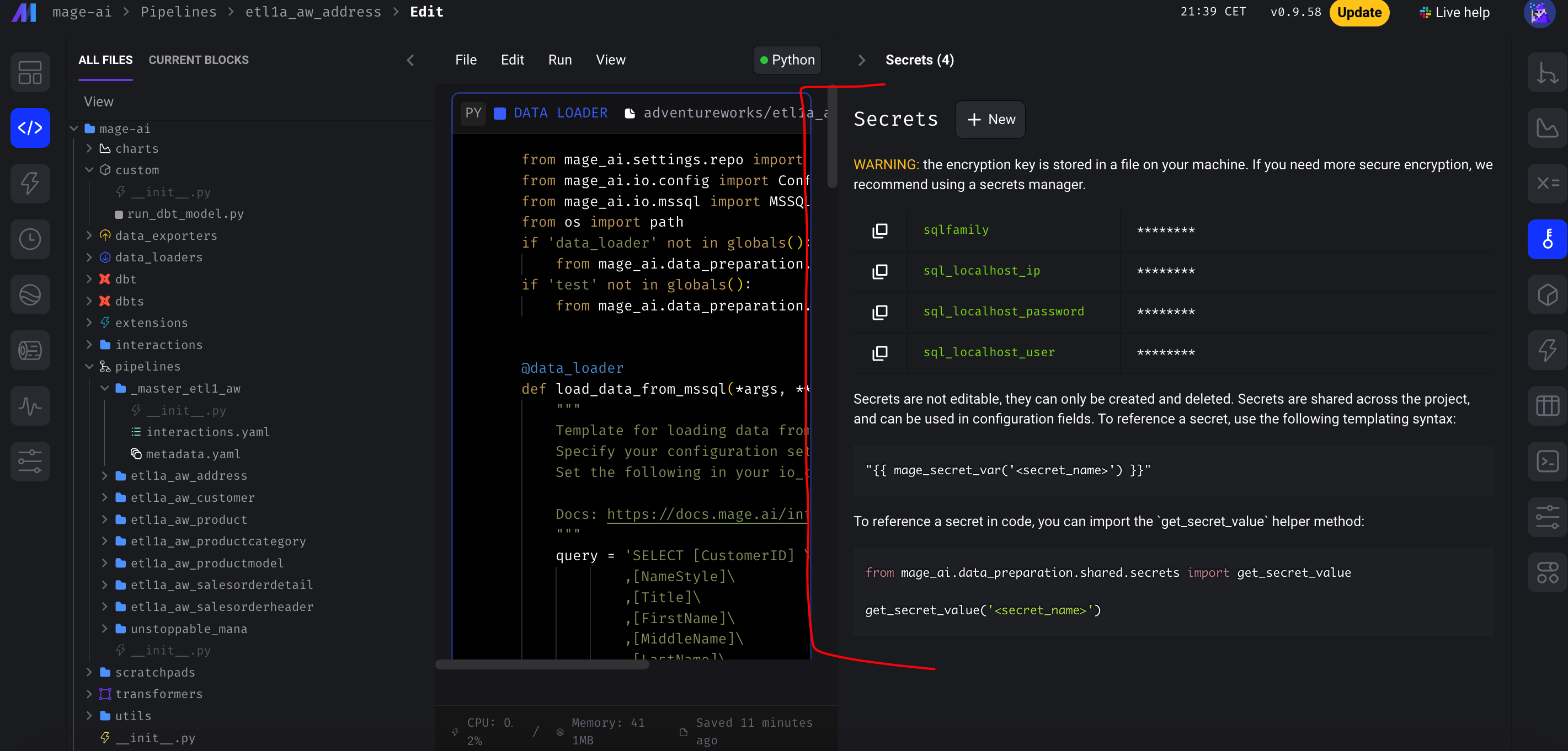

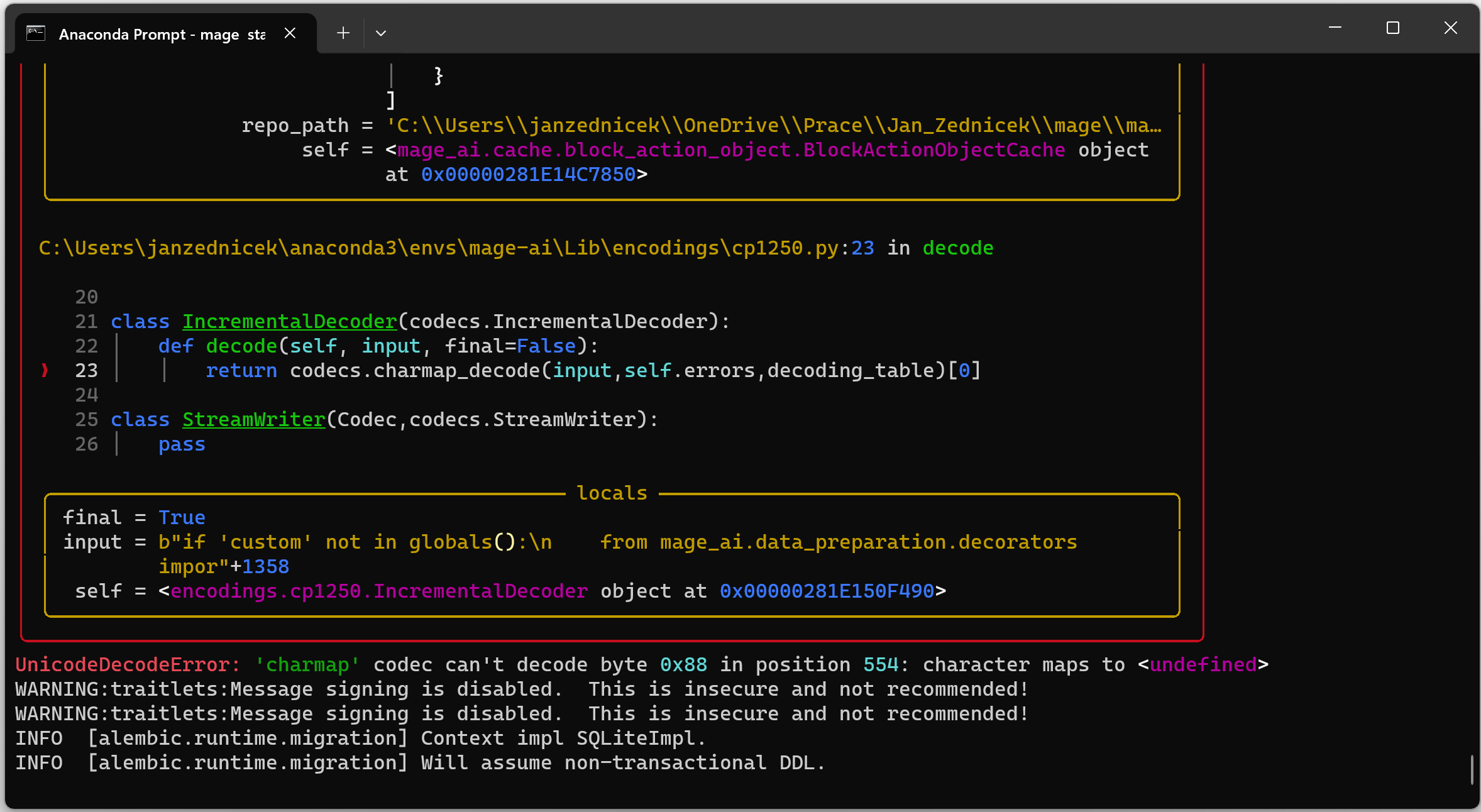

This guide looks at the features Mage.ai offers for data analysis. Although the tool is primarily used for ETL pipelines, it also includes features for exploratory analysis, data statistics, and charts, so you can perform initial data analysis without leaving the tool. Mage offers a wide range of…

![Screenshot](https://janzednicek.cz/wp-content/uploads/2024/01/Screenshot-2024-01-25-at-23.41.32.png)