SQL Server offers 3 types of database recovery models: simple recovery model, full recovery model, and bulk-logged recovery model.

The recovery model defines how SQL Server manages transactions and transaction logs and whether it stores or doesn’t store these data. Ultimately, this affects the options available for restoring data from backups. Each database can have only one recovery model, but different databases across a SQL instance can have different recovery models.

Simple Recovery Model

Each database has at least two files: the master file (.mdf) where data is stored and the log file (.ldf) where the transaction log is stored. When you perform a full or differential SQL backup on a database with this recovery model, you are backing up the .mdf file. In this case, you can only restore data to the point of the last backup. With this type of recovery model, you can perform only these two types of backups.

Transaction log backup is not possible, and you risk losing data from the time of the last full or differential backup. Simple recovery clears the transaction log after each transaction, so creating a restore point is not possible.

In some situations, however, it is better to opt for the simple recovery model, as it may not matter much. Your data may be easily reproducible, the database may be non-production, or there may be another reason.

How the Transaction Log Works in the Simple Recovery Model

In the Simple recovery model, data from transactions is not permanently stored in the .ldf file. This doesn’t mean that the transactions are not temporarily stored; they are. When a transaction is initiated, the transaction log is saved and waits until the transaction is completed. This behavior allows you to roll back data in case the transaction is interrupted to prevent data loss.

For example, during an update operation, if you click cancel in the middle of the transaction, the transaction log stores the data changes made during the update operation, making it possible to revert data to its original state upon user request.

Therefore, even with the Simple recovery model, you must manage (shrink) the .ldf file to keep it at a reasonable size, preventing it from growing too large. If you perform a resource-intensive operation on millions of records, the log file is likely to expand, and you should consider shrinking it. I personally schedule log file shrinks as part of a daily maintenance plan.

Example: Let’s consider a database named Temp, which has two files, .mdf and .ldf, both with a size of 8MB. The recovery model is set to SIMPLE.

After creating a table with BEGIN TRAN and inserting 100,000 records into it, we have a pending transaction. The transaction inserts records into the [dbo].[Test_Transakcni_Log] table, and we can see how it affects the transaction log.

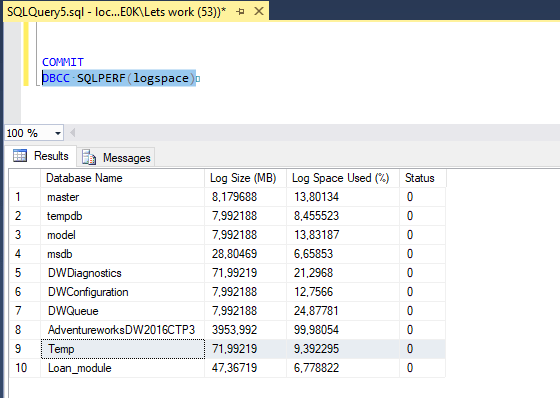

Using the Database console command DBCC SQLPERF(logspace), we can see that the size of the log file has increased to 72MB, with 43% utilization. This growth occurs because the increment is set to 64MB. What happens when we commit or roll back the transaction?

Committing the Transaction

After committing the transaction, we run DBCC SQLPERF(logspace) again, and we can see that the transaction log has almost cleared. If the recovery model were set to FULL, the data in the log would remain until a transaction log backup is performed or the data is not cleared from the log.